Coding with AI

If you open your favorite news page, you will surely find ChatGPT, the underlying AI model GPT-3, and its pricier and more capable successor GPT-4 among the trending topics. There are lots of weird and funny applications, and among them AI-assisted coding is the one that really made me curious.

So in this post I am going to collect my own experience as a user and my thoughts about the implications, and try to evaluate them, first with white hat and later with red hat thinking.

Pick two

Slashdot alone lists three pages of tools, and I had a tough time selecting the two services I wanted to take for a spin in this blog post.

I ultimately picked TabNine, because of its free tier and local options, and GitHub's CoPilot, due to my previous experience with it and its access to a vast amount of free training data. What also drew me in was the promise of code synthesis and, obviously, the inclusion of my own repositories according to my settings.

What can they do?

VSCode comes with nice integration for both of them and you are quickly ready to go once you’ve figured out the licensing.

In daily use, the code suggestions are nothing short of astonishing: they can complete words and single lines, and also provide whole bodies for methods and functions. While TabNine produced suggestions for single lines, CoPilot was able to complete whole methods for me, as can be seen in the following side-by-side animated GIFs:

[Animated GIFs, side by side: TabNine (left), CoPilot (right)]

The suggestions aren’t limited to code alone and can also provide complete sentences. I wrote this blog post partially with the help of both tools until I found it somewhat annoying, but more on this in the conclusion.

My favorite quote to sum up the capabilities is probably this:

What GPT-4 Does Is Less Like "Figuring Out" and More Like "Already Knowing". Yes, It’s a Stochastic Parrot, But Most Of The Time You Are Too, And It’s Memorized a Lot More Than You Have

Things to consider

Next comes an unsorted list of thoughts about the whole idea, hopefully with mostly rational white hat thinking. My personal opinion is limited to the conclusion after that.

Time to market

AI can considerably speed up the creation of boilerplate and repetitive code that cannot easily be factored out into other artifacts or templates.

Training and recruiting

Writing code is not easy to learn and if you aren’t well-versed in a given language the fail/success cycles can be long and frustrating.

AI can shorten these cycles and make programming in general more approachable for beginners, which by itself can also generate more interest and lure people into the business.

License fees and training costs

Adding tools to the corporate world usually incurs license fees, and this applies here as well.

There are plenty of companies providing these services, but let us just check the price tags of the two handpicked ones.

| Service | Individual costs (per month) | Business costs (per month per user) |
| --- | --- | --- |
| CoPilot | $10 (or $100 per year) | $19 |
| TabNine | $0 Starter / $12 Pro | Let’s talk |

Apart from the plain license and service costs, there might be additional costs to train staff or to create company guidelines on how to actually use this technology.
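To get a feeling for the numbers, here is a minimal Python sketch that turns the listed prices into yearly costs. The prices are the ones from the table; the team size of ten is a made-up assumption of mine, and training or guideline costs are left out on purpose because I cannot put a number on them.

```python
# List prices in USD, taken from the price table in this post.
COPILOT_INDIVIDUAL_MONTHLY = 10
COPILOT_INDIVIDUAL_YEARLY = 100
COPILOT_BUSINESS_MONTHLY = 19  # per user
TABNINE_PRO_MONTHLY = 12

# Hypothetical team size, chosen only for illustration.
TEAM_SIZE = 10


def yearly_cost(monthly_price: int, users: int = 1) -> int:
    """Return the license cost for one year at a fixed monthly price."""
    return monthly_price * 12 * users


if __name__ == "__main__":
    billed_monthly = yearly_cost(COPILOT_INDIVIDUAL_MONTHLY)
    saving = billed_monthly - COPILOT_INDIVIDUAL_YEARLY
    print(f"CoPilot individual, billed monthly: ${billed_monthly} per year")
    print(f"CoPilot individual, yearly plan saves: ${saving}")
    print(f"TabNine Pro, individual: ${yearly_cost(TABNINE_PRO_MONTHLY)} per year")
    print(
        f"CoPilot business, {TEAM_SIZE} users: "
        f"${yearly_cost(COPILOT_BUSINESS_MONTHLY, TEAM_SIZE)} per year"
    )
```

TabNine's business tier is missing here because its price is only available on request.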

Copyright

There are many pending lawsuits and copyright claims regarding the use of image generators like Stable Diffusion, DALL-E or MidJourney.

Normally, it is quite difficult to make assumptions about the actual training data, unless the case is as obvious as in the example posted on Hacker News, with an intact watermark from Getty Images:

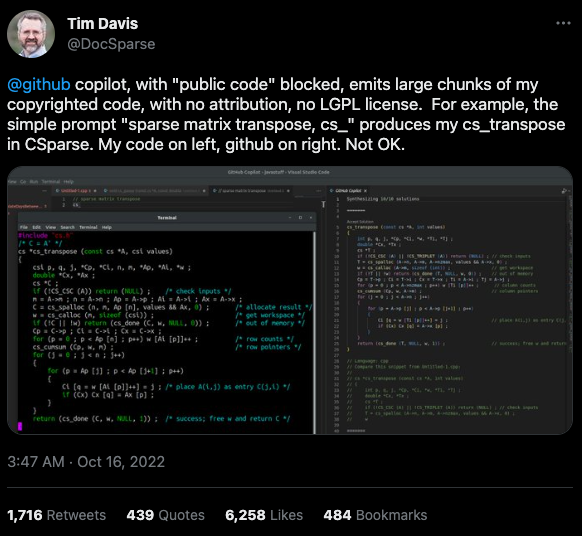

This is worse for software, where the original author of literally large parts of the suggested code can be identified easily.

Isolated customer systems

The effectiveness of the technology is limited by the amount and quality of the available training data, which can be quite scarce in a closed environment.

When the data is hidden inside closed customer systems, there is usually no option to install non-approved software.

Code duplication

When an AI assistant suggests a solution to a code prompt, it has seen something like it somewhere else, and where exactly that was is probably difficult to find out.

This might either lead to lots of code duplication or to coupling when the code is refactored to avoid this duplication.
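The trade-off can be sketched in a few lines of Python. All names here (`slugify_title`, `slugify_filename`, `slugify` and the two wrappers) are hypothetical and just stand in for a helper that an assistant might happily suggest twice in different places.

```python
import re


# Variant 1: duplication. The same suggested body ends up in two places
# and has to be fixed twice if it turns out to be wrong.
def slugify_title(title: str) -> str:
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def slugify_filename(name: str) -> str:
    return re.sub(r"[^a-z0-9]+", "-", name.lower()).strip("-")


# Variant 2: coupling. The body lives in one shared helper, but now both
# callers depend on it and every change to it affects both of them.
def slugify(text: str) -> str:
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def title_slug(title: str) -> str:
    return slugify(title)


def filename_slug(name: str) -> str:
    return slugify(name)
```

Neither variant is wrong by itself; the point is that with suggested code you might not even notice that you are choosing between the two.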

Performance

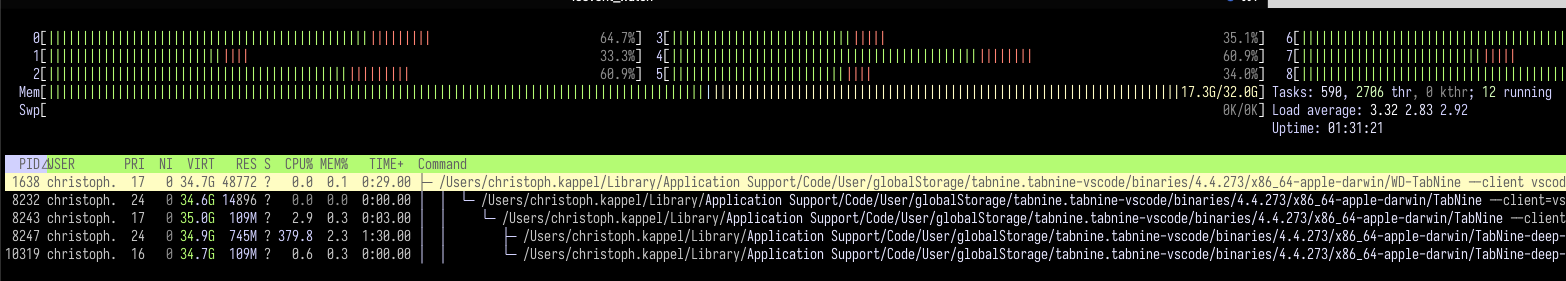

Many services provide multiple ways of using a large language model (LLM), but it typically boils down to either running it locally or using the cloud, with more processing power and also more suggestions due to the availability of training data.

Depending on the size of the actual data, the compute requirements might have a measurable impact.

The following screenshot shows the processes of TabNine on my local machine while working on this blog post:

Also, there are quite a few reports of performance problems.

Security

Re-using code can be a double-edged sword, especially when the actual source is unknown.

This is especially true for pages like StackOverflow, where you cannot be sure if the code was posted in the question or in the accepted answer.

Conclusion

If you consider all of the mentioned points, it is difficult to make up your mind about it, and it depends entirely on the goal you ultimately want to achieve.

For me, one of the weirdest sensations while writing this post was that, with AI autocompletion constantly running, the suggestions kind of change the way you express yourself, and I am not sure if I really like it.

Older completion systems like Omnicompletion give good and reasonable suggestions, and I don’t think my coding speed is really related to the speed at which I can type.

On the other hand, any system that helps to reach the level of the mythical 10x developer with coding superpowers is pretty much worth any investment from a business point of view (although I am not entirely sure whether this is based solely on the number of lines written (hello COCOMO) or on the quality of the code).

The ongoing progress will surely have a big impact on our business, and it is up to us to make the best of it:

Rauch likens the situation to GitHub providing a way of creating an “inline pull request,” where the submitter is an AI and you’re constantly reviewing their proposals, he said.